HONG KONG, February 10, 2026 - CIC extends our warmest congratulations to Axera AI Semiconductor Co., Ltd. (00600.HK) on its successful listing on the Hong Kong Stock Exchange (HKEX) today.

CIC's Collaborative Journey to the Listing

Throughout the listing process, CIC provided end-to-end support to the company and its sponsors:

Drafted and compiled the industry overview chapter of the prospectus, laying a solid research foundation for the formal listing application.

Assisted in responding to inquiries from regulatory authorities, ensuring comprehensive and accurate disclosure.

Refined listing application materials continuously to meet stringent market and regulatory requirements.

Axera AI – The First HKEX-listed Edge AI Chip Enterprise

Axera AI, established in 2019, is a leading supplier of artificial intelligence inference chips, dedicated to building high-performance perception and computing platforms for AI applications in edge computing and terminal devices. As the first edge AI chip enterprise listed on the HKEX, the company has achieved remarkable results in its IPO and industry positioning, becoming a benchmark enterprise in the global edge and end-side AI inference chip segment.

2026 HKEX IPO Financing & Cornerstone Investor Lineup

Axera AI raised a total of HK$2.961 billion in this HKEX listing, with a strong lineup of 16 cornerstone investors lending solid endorsement of its value. The cornerstone investors include domestic and international industrial players and investment institutions, such as Will Semiconductor Hong Kong (affiliated with OmniVision Technologies), Xinma Garment International (affiliated with Youngor Group), JSC International Investment Fund SPC, NGS Super Pty Limited and Desay SV Automotive Singapore Pte. Ltd. (affiliated with Desay SV), as well as Factorial Master Fund, Hel Ved Master Fund, Valliance Asset Management Limited and Mingshan Capital. In total, these investors subscribed for approximately US$185 million worth of the offered shares, demonstrating the capital market’s recognition of Axera AI’s core competitiveness and industry development potential.

Core Technological Pillars

Axera AI’s leading market position is underpinned by its three core technological pillars, which form a complete and competitive technical system for AI inference chip research and development and application:

Axera Neutron: A dedicated processing architecture with a mixed-precision NPU, which achieves outstanding AI inference efficiency through advanced mixed-precision computing.

Axera Proton: The world’s first commercially mass-produced AI-ISP (AI Image Signal Processor), which significantly improves the quality of visual perception.

Comprehensive auxiliary toolchain: Including the Pulsar2 toolchain and Software Development Kit (SDK), which ensure the efficient deployment and operation of AI inference in diverse scenarios.

Commercial Layout & Market Position

Since its establishment, Axera AI has focused on transforming advanced technologies into market-validated products, and has achieved commercial deployment in multiple scenarios including visual terminal computing, intelligent vehicles and edge AI inference. As of September 30, 2025, Axera AI had shipped more than 165 million SoCs in total.

According to CIC’s research data, Axera AI held a prominent market position in the global and Chinese AI inference chip industry in 2024:

The 5th largest global supplier of visual end-side AI inference chips by shipment volume.

The 2nd largest domestic supplier of intelligent driving SoCs in China by the sales volume of SoC-equipped intelligent vehicles.

The 3rd largest supplier in China’s edge AI inference field by shipment volume.

Global Edge & End-Side AI Inference Chip Market Research & Industry Overview (By CIC)

Against the backdrop of the breakthrough development of AI large models and the revolutionary transformation of AI deployment modes, the global edge and end-side AI inference chip industry has entered a period of high-speed growth.

CIC conducted systematic, in-depth research on the industry, covering the evolution of AI deployment modes, the core value of AI inference chips, and market size and growth trends. It also identified the industry’s key driving factors and future development trends, providing authoritative data and research support for the capital market and industry practitioners.

The Rise of Edge & End-Side AI

In recent years, AI large models (e.g., Large Language Models (LLM), Vision-Language Models (VLM), and Vision-Language-Action Models (VLA)) have achieved breakthrough progress, providing a strong driving force for the future development of AI. These models have rapidly penetrated into multiple application scenarios by supporting important functions such as AI-generated content, image recognition, content recommendation, sales forecasting and financial risk management, expanding the scope of AI applications. AI has thus evolved from an auxiliary tool to the "nervous system" of intelligent devices, bringing cutting-edge technology within reach for end users.

In the past, AI large models faced extremely high deployment thresholds due to their huge computing and storage requirements, and could only rely on expensive cloud infrastructure equipped with professional hardware and high bandwidth. Since 2024, with the improvement of end-side and edge computing capabilities, the popularization of lightweight AI models and the development of open-source LLMs, the AI deployment mode has undergone a revolutionary transformation. These technological advances have significantly reduced deployment and bandwidth costs, enabling AI large models to run locally on edge and terminal devices. This transformation has overcome the challenges of cost, network dependence and geographical restrictions in the past deployment of AI large models, empowered a wider range of intelligent devices, and accelerated the popularization of AI.

AI Chips – The Core Driving Force of AI Computing

To handle increasingly complex computing tasks, the booming AI market has an increasingly urgent demand for higher computing power, making AI chips a key driving force for future technological progress. Designed specifically for processing various AI workloads, AI chips are the pillar of AI development. By optimizing the parallel processing architecture and power efficiency design, these chips improve the efficiency of large-scale parallel computing.

Training and inference are the two major computing tasks of AI chips. Training comes before practical application: it processes massive amounts of data and optimizes parameters to build models. For this reason, training was the main focus in the early stage of the AI SoC industry’s development. However, as the performance and practicality of AI models (especially LLMs) have improved, demand for AI chips has expanded and the industry now focuses more on practical applications, making AI inference chips steadily more important.

AI inference chips can be deployed in cloud, edge and end-side scenarios, and each scenario has different customized requirements for chip design:

Cloud AI inference chips: Usually used in data centers to handle large-scale, high-density and high-concurrency centralized inference tasks, with priority given to high computing power, wide applicability, flexibility and scalability.

Edge AI inference chips: Deployed in edge servers, gateways or base stations close to data sources to perform real-time local inference, requiring a careful balance between high performance and power consumption to ensure low latency, data security and operational stability.

End-side AI inference chips: Directly applied to terminal devices such as consumer electronic products (e.g., smartphones), intelligent vehicles and smart home appliances, requiring strict optimization to balance miniaturization, ultra-low power consumption and effective inference performance to support stable long-term use in different environments.

AI Inference Chip Market Size (Global & China)

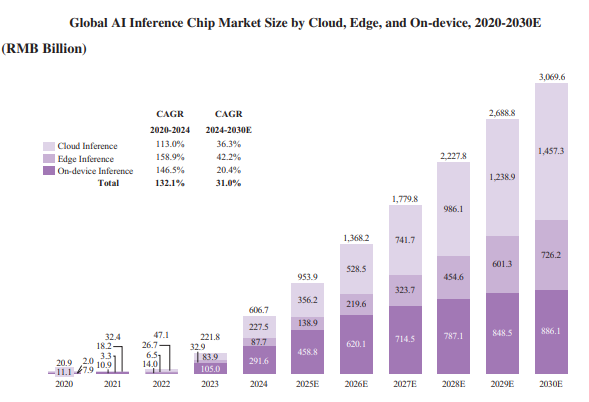

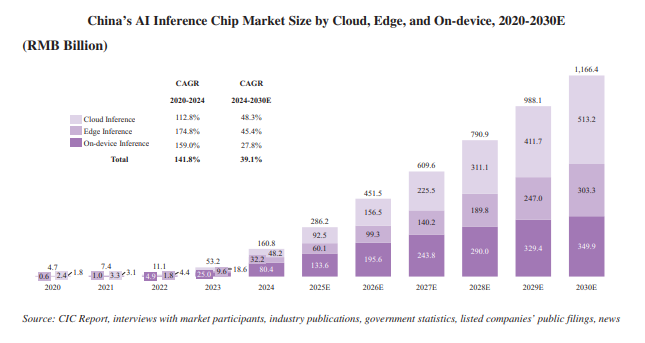

Driven by the shift of AI inference tasks from a cloud-centric model to a more decentralized model covering cloud, edge and terminal devices, the demand for edge and end-side AI inference has surged, thus accelerating the popularization of global and Chinese edge and end-side AI chips. CIC has released the latest market size data and growth forecasts for the global and Chinese AI inference chip markets (2020-2030), with core data as follows:

Market Size

Global market: The total scale reached 606.7 billion yuan, of which cloud inference, edge inference and end-side inference chips accounted for 227.5 billion yuan, 87.7 billion yuan and 291.6 billion yuan respectively.

Chinese market: The total scale reached 160.8 billion yuan, of which cloud inference, edge inference and end-side inference chips accounted for 48.2 billion yuan, 32.2 billion yuan and 80.4 billion yuan respectively.
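To put the breakdown above in proportion, the segment figures can be converted into shares of each stated total. The following is a minimal sketch in Python that uses only the figures quoted above. The names `MARKETS` and `segment_shares` are illustrative assumptions and are not part of CIC's methodology. Because the published figures are rounded, the global segment values sum to slightly more than the stated total.

```python
# Figures in billions of yuan, copied from the market size breakdown above.
# The dictionary layout and names are illustrative, not CIC's own data model.
MARKETS = {
    "Global": {"total": 606.7, "cloud": 227.5, "edge": 87.7, "end-side": 291.6},
    "China": {"total": 160.8, "cloud": 48.2, "edge": 32.2, "end-side": 80.4},
}


def segment_shares(market):
    """Return each segment's share of the stated total, in percent."""
    total = market["total"]
    return {
        name: 100.0 * value / total
        for name, value in market.items()
        if name != "total"
    }


for region, market in MARKETS.items():
    shares = segment_shares(market)
    line = ", ".join(f"{name} {share:.1f}%" for name, share in shares.items())
    print(f"{region}: {line}")
```

On these figures, end-side chips form the largest segment in both markets, and edge and end-side chips together account for more than half of each stated total.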

Key Industry Drivers & Development Trends

Based on in-depth industry research and data analysis, CIC identified three core driving factors and development trends that will boost the rapid development of the global AI inference chip industry, especially the edge and end-side segments, in the next few years:

1. The Growth of AI Intelligent Devices

Open-source models such as DeepSeek support low-cost and high-performance end-side AI, enabling smooth multi-modal interaction on terminal and edge devices through voice, touch, gesture, facial expressions and other methods. The improvement of this capability has reduced deployment costs, prompted more enterprises to adopt AI applications, and thus driven the demand for edge and end-side AI inference chips. According to CIC’s data, the global penetration rate of AI intelligent devices increased from less than 1.0% in 2020 to 9.4% in 2024, and is expected to exceed 44.0% by 2030. This continuous improvement has boosted the demand for edge and end-side AI inference computing, making edge and end-side AI inference chips a key driving force for this intelligent transformation.

2. Surge in Data Volume & Demand for Low Latency

In applications with strict real-time requirements, such as industrial control, traffic monitoring, assisted driving, robot interaction and safety feedback, devices require millisecond-level response speeds for data processing. The traditional cloud architecture is limited by network bandwidth, communication latency and computing resource scheduling, making it difficult to maintain stable real-time response and continuous inference. By deploying AI inference chips at edge nodes close to data sources, fast local processing, real-time event recognition and multi-device collaborative response can be achieved, effectively supporting the execution of high-frequency and low-error-rate tasks. With the increasing intelligence of devices, edge inference chips have become the hardware infrastructure that meets the needs of real-time computing scenarios, and their deployment scope is expanding rapidly.

3. Data Compliance Promotes Localized Processing

With the acceleration of digitalization, data has evolved from a technical resource into a core strategic asset. In response, governments around the world have issued increasingly stringent regulatory policies. Enterprises now face greater regulatory pressure when processing sensitive data, especially in the fields of cross-border data transmission, personal data processing and automated decision-making. For industries with high data sensitivity such as healthcare, finance and government, localized inference has become a practical solution to balance operational efficiency and regulatory compliance. Against this background, edge and end-side AI inference chips have become important infrastructure for localized intelligent computing, and their advantages in real-time response, closed-loop data management and security help them meet ever-changing compliance requirements.

As a seasoned industry consultant, CIC offers services such as market sizing, competitive analysis and enterprise value verification, with global experience in advising "first-in-sector" IPOs in the AI and intelligent manufacturing sectors.

From IPO preparation to listing hearings, CIC unearths the true intrinsic value of enterprises and translates it into actionable insights for a successful capital market debut. Prior to Axera AI, CIC supported leading enterprises in successful listings both domestically and overseas, including MiniMax, Biren Technology, Horizon Robotics and Baidu.

CIC Reports are now available on Bloomberg and FactSet portals.

About CIC

CIC is a professional consulting firm offering tailored end-to-end support across the full investment and financing lifecycle. The firm boasts a world-leading track record in guiding landmark first-in-sector IPOs across global markets, alongside unrivaled reach and in-depth coverage capabilities across specialized niche market segments.

CIC helps enterprises refine scalable business models and craft compelling capital narratives to enable seamless access to global capital markets, while serving as a trusted due diligence partner to investment institutions. It delivers granular industry insights and direct access to subject matter experts, empowering clients to identify high-value opportunities and mitigate critical risks effectively.

Media Contact

marketing@cninsights.com